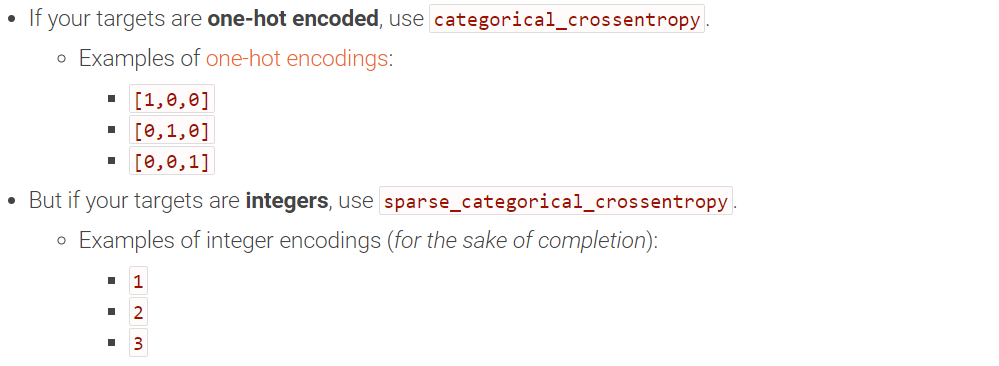

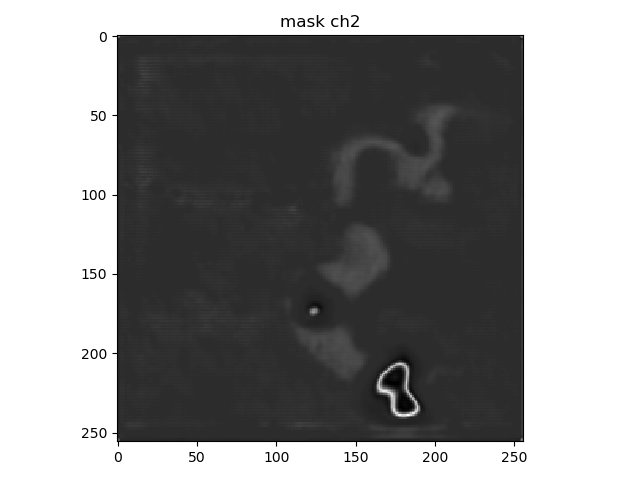

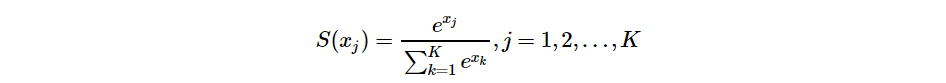

In this tutorial, I will give an overview of the TensorFlow 2.x features through the lens of deep reinforcement learning (DRL) by implementing an advantage actor-critic (A2C) agent that solves the classic CartPole-v0 environment. While the goal is to showcase TensorFlow 2.x, I will do my best to make DRL approachable as well, including a bird's-eye overview of the field.

In fact, since the main focus of the 2.x release is making life easier for developers, it's a great time to get into DRL with TensorFlow. For example, the source code for this blog post is under 150 lines, including comments! The code is also available as a notebook on Google Colab.

To follow along, I recommend setting up a separate (virtual) environment. I prefer Anaconda, so I'll illustrate with it:

> conda create -n tf2 python=3.7

Let us quickly verify everything works as expected:

> import tensorflow as tf
> print(tf.executing_eagerly())

Note that we are now in eager mode by default! If you are not yet familiar with eager mode, then, in essence, it means that computation executes at runtime rather than through a pre-compiled graph. You can find a good overview in the TensorFlow documentation.

One great thing about TensorFlow 2.1 specifically is that there is no more hassle with separate CPU/GPU wheels! TensorFlow now supports both by default and targets appropriate devices at runtime. The benefits of Anaconda are immediately apparent if you want to use a GPU. Setup for all the necessary CUDA dependencies is just one line:

> conda install cudatoolkit=10.1

You can even install different CUDA toolkit versions in separate environments!

Reinforcement Learning

Generally speaking, reinforcement learning is a high-level framework for solving sequential decision-making problems. An RL agent navigates an environment by taking actions based on some observations, receiving rewards as a result. Most RL algorithms work by maximizing the expected total rewards an agent collects in a trajectory, e.g., during one in-game round.

The output of an RL algorithm is a policy, that is, a function from states to actions. A valid policy can be as simple as a hard-coded no-op action, but typically it represents a conditional probability distribution of actions given some state.

Figure: A general diagram of the RL training loop.

RL algorithms are often grouped based on their optimization loss function.

Temporal-Difference methods, such as Q-Learning, reduce the error between predicted and actual state(-action) values.

Policy Gradients directly optimize the policy by adjusting its parameters. Calculating the gradients themselves is usually infeasible; instead, they are often estimated via Monte Carlo methods.

The most popular approach is a hybrid of the two: actor-critic methods, where policy gradients optimize the agent's policy, and the temporal-difference method is used as a bootstrap for the expected value estimates.

While much of the fundamental RL theory was developed on the tabular cases, modern RL is almost exclusively done with function approximators, such as artificial neural networks. Specifically, an RL algorithm is considered deep if the policy and value functions are approximated with neural networks.

Figure: DRL implies ANN is used in the agent's model.
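To make the actor-critic idea a bit more concrete, here is a minimal sketch in plain Python (no TensorFlow, so nothing here is the TensorFlow API). It assumes a discrete action space and shows three pieces: a policy as a probability distribution over actions, returns bootstrapped from a value estimate in the temporal-difference style, and the two loss terms an actor-critic agent would typically minimize. All function names, the `gamma` discount factor, and the sample numbers are hypothetical choices for illustration, not code from this post.

```python
import math
import random


def softmax(logits):
    """Turn unnormalized scores into a probability distribution over actions."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_action(probs, rng):
    """Draw an action index according to the policy's probabilities."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def bootstrapped_returns(rewards, dones, next_value, gamma=0.99):
    """Discounted returns, bootstrapped from the critic's estimate
    of the state that follows the last collected step."""
    returns = []
    running = next_value
    for reward, done in zip(reversed(rewards), reversed(dones)):
        # A finished episode (done == 1.0) contributes no future value.
        running = reward + gamma * running * (1.0 - done)
        returns.append(running)
    returns.reverse()
    return returns


def actor_critic_losses(log_probs, values, returns):
    """Actor (policy-gradient) and critic (squared-error) losses,
    averaged over the batch. Advantages are treated as constants."""
    advantages = [ret - val for ret, val in zip(returns, values)]
    n = len(returns)
    policy_loss = -sum(lp * adv for lp, adv in zip(log_probs, advantages)) / n
    value_loss = sum(adv ** 2 for adv in advantages) / n
    return policy_loss, value_loss, advantages


if __name__ == "__main__":
    rng = random.Random(0)
    probs = softmax([0.2, -0.1])        # hypothetical logits for two actions
    action = sample_action(probs, rng)  # stochastic policy: sample, don't argmax
    returns = bootstrapped_returns(
        rewards=[1.0, 1.0, 1.0], dones=[0.0, 0.0, 1.0], next_value=0.5, gamma=0.9
    )
    losses = actor_critic_losses([math.log(probs[action])] * 3, [2.0, 2.0, 2.0], returns)
    print(probs, action, returns, losses)
```

In a deep RL agent, the logits and value estimates would come from a neural network, and an automatic differentiation library would compute the gradients of these losses with respect to the network parameters.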
